Course Review: edX "How to Learn Online" — A Software Engineer's Honest Take

I just completed edX's "How to Learn Online" course. It is a free, beginner-level course about study strategies for online learners. Some of it is genuinely valuable. Some of it is filler. Here is what a software engineer who works with AI daily actually got out of it.

New Series: Courses I've Taken

This is the first post in a new series where I review online courses I have completed. Honest assessments, no affiliate links, no sponsorships. Just what I learned (or didn't) and whether the time investment was worth it. If you are deciding whether to take a course, these reviews are for you.

Course At a Glance

| Field | Details |
| --- | --- |
| Course | How to Learn Online |
| Provider | edX |
| Duration | 1 week, 4–6 hours |
| Price | Free to audit / $69 for verified certificate |
| Level | Beginner |
| edX Rating | 4.1/5 (101 reviews out of 424,654+ enrollments) |
| Structure | 6 sections, 17 videos, 56 lessons |

Let me point something out immediately: 101 reviews out of 424,654 enrollments. That is a review rate of roughly 0.024%, or about 1 in 4,200. For context, most consumer products see 1–5% review rates. When fewer than 1 in 4,000 people leave feedback, that is not a rounding error. It is more likely a signal about engagement and completion.
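The arithmetic is easy to check. Here is a minimal Python sketch that uses only the two figures from the table above; the variable and function names are mine, not anything from edX:

```python
# Figures as listed on the edX course page quoted in the table above.
REVIEWS = 101
ENROLLMENTS = 424_654


def review_rate(reviews: int, enrollments: int) -> float:
    """Return the share of enrollees who left a review, as a fraction."""
    return reviews / enrollments


rate = review_rate(REVIEWS, ENROLLMENTS)
print(f"Review rate: {rate:.3%}")                    # Review rate: 0.024%
print(f"About 1 in {round(1 / rate):,} enrollees")   # About 1 in 4,204 enrollees
```

Since edX lists enrollments as "424,654+", treat the result as an upper bound on the review rate rather than an exact figure.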

The Verdict (Up Front)

The Good

- ABCDs framework is research-backed and solid

- Metacognition section is genuinely transformative

- Debunks the VARK learning styles myth

- Desirable difficulty concept reframes struggle as part of learning

- Current AI content (ChatGPT, Claude, Gemini)

- Maria/Cameron case studies ground abstract ideas

The Meh

- 0.024% review rate is a red flag

- Critical concepts buried in wrong sections

- LMS navigation content is obvious

- AI literacy is surface-level for practitioners

- Weekly study plan section feels like filler

The Bad

- AI-generated avatar videos in a course teaching AI literacy

- 60% skimmable for experienced learners

- No practical exercises beyond journal prompts

- "Cognitive offloading" warning lacks concrete strategies

What I Actually Learned

Despite my criticisms, this course changed how I think about learning. Not because of the beginner-level content about "what is a MOOC," but because of three concepts that hit differently when you are already deep in the field.

1. Metacognition: Thinking About Your Own Thinking

The single most valuable concept in this course. Metacognition is the practice of monitoring your own cognitive processes while you are learning — not just absorbing information, but actively asking: "Am I actually understanding this, or am I just reading words?"

As a software engineer, I recognized this immediately. When I am debugging, there is a critical difference between "I am looking at this code" and "I understand what this code is doing." Metacognition is the skill that lets you catch yourself when you have slipped from the second state into the first.

The Key Insight

Since taking this course, I have started asking myself a simple question whenever I hit a wall during development: "Am I actually learning right now, or am I just trying to get this task done?" Those are two different activities with different optimal strategies. When I need to learn, I stop reaching for shortcuts. When I need to ship, I use every tool available. The mistake is confusing the two.

2. Desirable Difficulty: The Struggle IS the Learning

This one rewired my relationship with AI tools. The course presents research showing that productive struggle — when learning feels hard but not impossible — builds stronger neural pathways than passive consumption. Easier is not always better. In fact, easier is often worse for retention.

Think about what this means for AI-assisted development. Every time I ask Claude or ChatGPT for an answer at the first sign of difficulty, I might be completing a task but I am potentially undermining my own learning. The difficulty is not a bug; it is the mechanism by which my brain actually encodes new knowledge.

I have since changed my workflow. When I encounter something I genuinely need to learn (a new API, a new pattern, a new concept), I give myself at least 20 minutes of unassisted struggle before reaching for AI help. When I just need to get something done (boilerplate code, config files, syntax I have written a hundred times), I use AI without guilt. The distinction matters.
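That workflow boils down to a small decision rule. Here is a rough Python sketch of it. The `Goal` enum, the `may_use_ai` function, and treating the 20 minutes as a hard threshold are all my own illustrative assumptions, not something the course provides:

```python
from enum import Enum


class Goal(Enum):
    LEARN = "learn"  # building understanding of something genuinely new
    SHIP = "ship"    # boilerplate, config, syntax written a hundred times


# Assumption: the 20-minute rule of thumb from the paragraph above.
STRUGGLE_MINUTES = 20


def may_use_ai(goal: Goal, minutes_unassisted: int) -> bool:
    """Return True when reaching for AI help fits the current goal."""
    if goal is Goal.SHIP:
        return True  # task completion: use every tool available
    # Learning: require a stretch of unassisted struggle first.
    return minutes_unassisted >= STRUGGLE_MINUTES


print(may_use_ai(Goal.SHIP, 0))    # True
print(may_use_ai(Goal.LEARN, 5))   # False
print(may_use_ai(Goal.LEARN, 20))  # True
```

The point of writing it down is not precision. It forces the question "which goal am I in right now?" before the chat window opens.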

3. The VARK Learning Styles Myth Is Dead (Good Riddance)

The course states clearly: the idea that people are "visual learners," "auditory learners," "read/write learners," or "kinesthetic learners" (the VARK model) has no scientific support. None. It has been studied extensively, and the evidence says people learn best when they engage material through multiple modalities, not when they match content to some supposed learning style.

I have always suspected this was oversimplified nonsense, and it was satisfying to see a course from edX call it out directly. The next time someone tells you they are a "visual learner," you can inform them the research does not support that claim. Use it wisely.

The ABCDs Framework

The structural backbone of the course is the ABCDs framework for online learning success. It is not revolutionary, but it is well-organized and research-grounded.

- A — Awareness: Understanding your own learning process. This is where metacognition, active learning, and learning preferences live. The core argument: you cannot improve a process you do not observe.

- B — Balance: Managing yourself as a whole person while learning. Self-regulation, stress management, growth mindset. The sleep and exercise section is backed by strong evidence — sleep literally consolidates memory.

- C — Community: Learning with others. Discussion forums, study groups, peer learning. The course correctly identifies that the biggest challenge of online learning is isolation, and community is the antidote.

- D — Dedication: Evidence-based study techniques. Retrieval practice, spaced repetition, interleaving, the SQ3R method. This is where the science is strongest and most actionable.

The framework works because it covers the full surface area of what makes online learning succeed or fail. Most courses about learning focus only on study techniques (the D). This one acknowledges that self-awareness, wellbeing, and social connection are prerequisites, not extras.

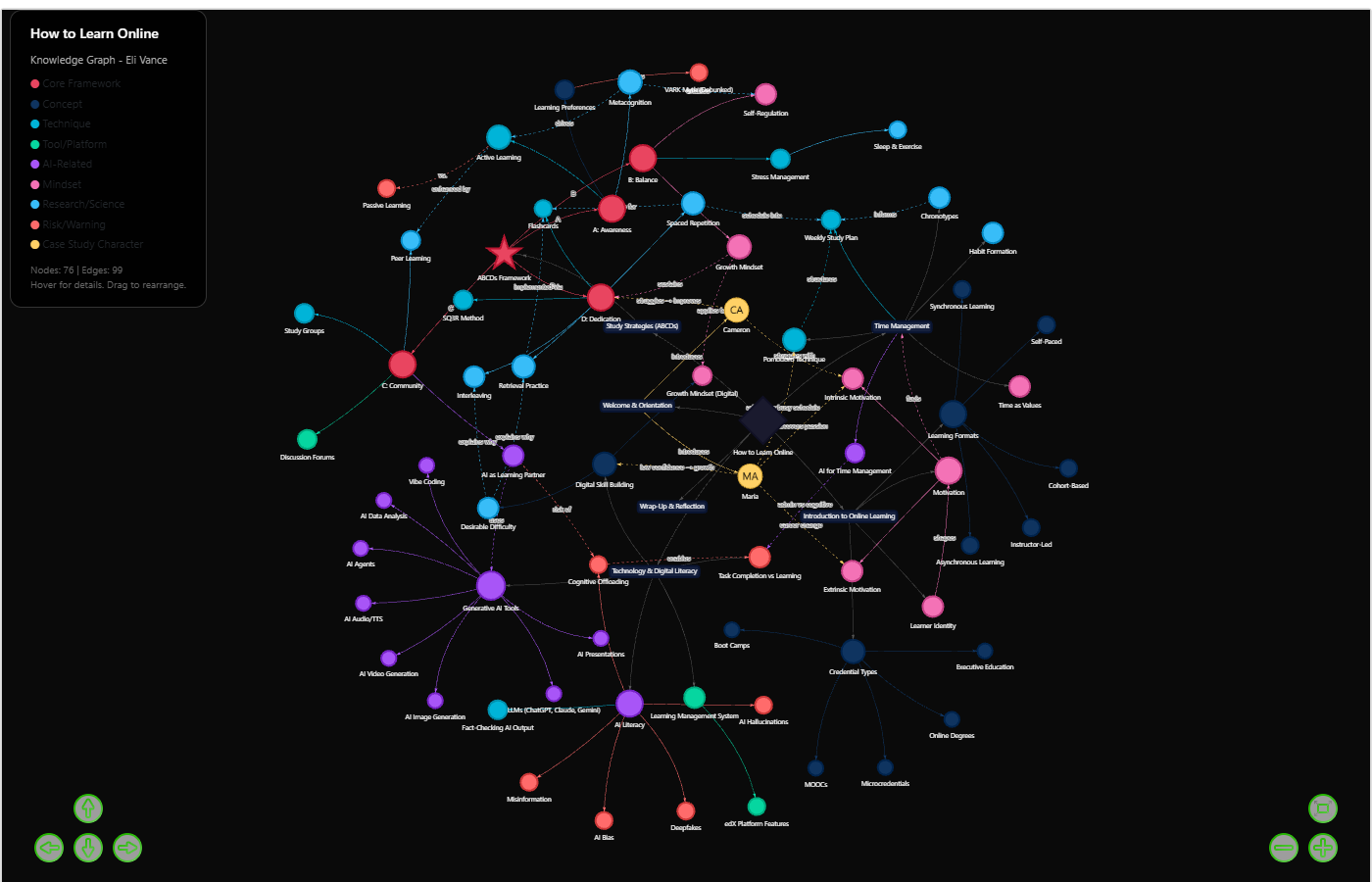

The Knowledge Graph

To map how all the course concepts relate to each other, I built an interactive knowledge graph. 76 nodes, 99 edges, covering every major idea in the course and how they connect.

Building this graph was more educational than most of the course itself. When you force yourself to identify every concept and explicitly define how they relate to each other, you discover structure that passive reading misses entirely. For example, the "Cognitive Offloading" node (AI overreliance) connects directly to both "Task Completion vs Learning" and "AI as Learning Partner" — the course treats these in separate sections, but they are fundamentally the same tension viewed from different angles.
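The underlying structure is nothing exotic. Below is a minimal sketch of an undirected concept graph in Python. It is an illustration, not the code behind the interactive graph: the `ConceptGraph` class is hypothetical, and it holds only the three nodes and two edges named above.

```python
from collections import defaultdict


class ConceptGraph:
    """Undirected graph of course concepts (hypothetical helper)."""

    def __init__(self) -> None:
        self._adjacent: dict[str, set[str]] = defaultdict(set)

    def connect(self, a: str, b: str) -> None:
        """Add an undirected edge between two concepts."""
        self._adjacent[a].add(b)
        self._adjacent[b].add(a)

    def neighbors(self, concept: str) -> list[str]:
        """Return the concepts directly linked to `concept`, sorted by name."""
        return sorted(self._adjacent.get(concept, set()))

    @property
    def node_count(self) -> int:
        return len(self._adjacent)

    @property
    def edge_count(self) -> int:
        # Each undirected edge is stored twice, once per endpoint.
        return sum(len(linked) for linked in self._adjacent.values()) // 2


graph = ConceptGraph()
graph.connect("Cognitive Offloading", "Task Completion vs Learning")
graph.connect("Cognitive Offloading", "AI as Learning Partner")

print(graph.neighbors("Cognitive Offloading"))
# ['AI as Learning Partner', 'Task Completion vs Learning']
print(graph.node_count, graph.edge_count)  # 3 2
```

Having to call `connect` explicitly for every relationship is where the learning happens: you cannot add an edge without deciding that two ideas actually relate.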

The AI Content: Surface-Level but Current

The course covers generative AI in seven categories: LLMs (ChatGPT, Claude, Gemini), image generation, video generation (HeyGen, Synthesia), audio/TTS, presentations, autonomous agents, and "vibe coding." Treating AI-assisted programming as its own category makes it more current than most academic content I have seen.

For someone new to AI, this is a reasonable overview. For someone who works with these tools daily, it is surface-level. The AI literacy section covers important topics — hallucinations, bias, deepfakes, misinformation — but does not go deep enough to be actionable.

The Irony

The course uses AI-generated avatar videos (built with HeyGen) for its module review summaries. A course that teaches students to be critical of AI-generated media uses AI-generated media in its own delivery. I do not think this is necessarily wrong — the technology is legitimate — but it is an odd choice that goes unacknowledged. If you are teaching AI literacy, acknowledging your own use of AI tools would have been a strong modeling opportunity.

The most useful AI-related concept in the course is "cognitive offloading" — the risk that over-reliance on AI tools reduces your own cognitive capability. The idea is sound and backed by research. But the course stops at the warning without providing concrete decision frameworks for when to use AI versus when to struggle through manually. That gap is exactly what practitioners need.

The Task Completion vs. Learning Distinction

Alongside metacognition, this is the most important idea in the entire course, and it is criminally buried in Section 3 instead of being the opening argument.

The distinction is simple: task completion is about getting something done efficiently (shortcuts are fine), while learning is about building understanding (shortcuts undermine the goal). These require different strategies. When you conflate them, you end up using AI to "learn" — which means you complete the task but encode nothing.

This concept deserves its own module, not a sidebar mention. For anyone using AI tools in a learning context, nothing else in the course is as relevant, yet the course treats it like a footnote.

Study Techniques Worth Keeping

The Dedication section contains evidence-based study techniques that are well-presented and genuinely useful. Here are the ones I have adopted:

Spaced Repetition

Reviewing material at increasing intervals: Day 1, Day 3, Day 7, Day 14, Day 30. This combats the forgetting curve and is dramatically more efficient than cramming. I have started applying this to new APIs and frameworks I learn — instead of reading the docs once and forgetting, I revisit key concepts on a schedule.
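The schedule is simple enough to generate. Here is a minimal Python sketch, assuming "Day 1" means the day you first study the material (the course does not spell that out, and the function name is my own):

```python
from datetime import date, timedelta

# Review days from the schedule above.
REVIEW_DAYS = (1, 3, 7, 14, 30)


def review_dates(first_study: date, review_days=REVIEW_DAYS) -> list[date]:
    """Map "Day N" labels to calendar dates.

    Assumption: Day 1 is the first study day itself, so Day N falls
    N - 1 days after it.
    """
    return [first_study + timedelta(days=day - 1) for day in review_days]


for when in review_dates(date(2025, 1, 6)):
    print(when.isoformat())
# 2025-01-06
# 2025-01-08
# 2025-01-12
# 2025-01-19
# 2025-02-04
```

Dropping these dates into a calendar is the low-tech version. Dedicated flashcard tools adjust the intervals based on how well you recall each item, which this fixed schedule does not attempt.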

Retrieval Practice

Testing yourself on material rather than re-reading it. The research is clear: re-reading feels productive but is not. Attempting to recall information from memory — even when you fail — strengthens the memory pathways more than passive review.

Interleaving

Mixing different topics or problem types during study sessions. It feels harder (there is the desirable difficulty again) but produces more flexible, transferable knowledge than studying one topic exhaustively before moving to the next.
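One simple way to interleave is to round-robin across topic queues instead of finishing one queue before starting the next. The sketch below is only one possible reading of the idea; the `interleave` helper and the topic names in the example are hypothetical, not from the course:

```python
from itertools import zip_longest

_SKIP = object()  # sentinel marking slots where a shorter queue has run out


def interleave(*topic_queues):
    """Round-robin across queues: one item from each topic, then repeat."""
    return [
        item
        for round_items in zip_longest(*topic_queues, fillvalue=_SKIP)
        for item in round_items
        if item is not _SKIP
    ]


# Hypothetical practice items for one study session.
sql = ["sql-joins", "sql-indexes"]
regex = ["regex-groups"]
asyncio_topics = ["async-tasks", "async-cancel"]

print(interleave(sql, regex, asyncio_topics))
# ['sql-joins', 'regex-groups', 'async-tasks', 'sql-indexes', 'async-cancel']
```

Blocked practice would be `sql + regex + asyncio_topics`. The interleaved order feels worse in the moment, which is the desirable difficulty at work.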

My Five Personal Takeaways

- Metacognition is my new obsession. I now actively monitor my own learning process. When I am coding and hit a wall, I ask "am I actually learning or am I just trying to get this task done?" The answer determines my strategy.

- 66 days to form a habit. The research says it takes an average of 66 days (range: 18–254 days) to form a new habit. I have started tracking my learning habits against this benchmark instead of the popular "21 days" myth.

- Desirable difficulty changed my AI usage. I have stopped reaching for AI assistance at the first sign of struggle. The struggle IS the learning. I now give myself 20 minutes of unassisted work before opening an AI chat for genuinely new concepts.

- Chronotypes matter for deep work. I do my deepest, most complex work in late mornings. I have reorganized my schedule to protect that window for learning and hard problems, pushing routine work to my afternoon energy dip.

- The VARK myth is debunked. I always suspected "I'm a visual learner" was oversimplified. The research confirms it: engaging material through multiple modalities beats matching content to a single supposed style.

Who Should Take This Course

Take it if...

- You are new to online learning and want a structured introduction

- You have never heard of metacognition, spaced repetition, or retrieval practice

- You want a research-backed framework for study strategies

- You have 4–6 hours to spare and the price is free

Skip it if...

- You are already familiar with evidence-based study techniques

- You work with AI daily and want depth on AI literacy

- You are looking for practical exercises, not journal prompts

- You are considering paying $69 for the certificate — it is not worth it unless you need it for a specific institutional requirement

The Bottom Line

"How to Learn Online" is a solid introductory course that punches above its weight in a few key areas (metacognition, desirable difficulty, debunking VARK) and fills time in others (LMS navigation, weekly planning templates). The ABCDs framework is a useful mental model I will keep. The AI content is current but shallow.

If you are already an experienced online learner or AI practitioner, you will skim 60% of this course. But the 40% that lands — especially the metacognition and desirable difficulty material — is genuinely worth the 4–6 hour investment at the free tier.

The Maria and Cameron case studies are well-constructed. Maria is a 40-something restaurant manager changing careers — high discipline, low tech confidence. Cameron is a 20-year-old digital native on a break from college — high tech skills, low motivation. Seeing abstract concepts applied to these two different profiles makes the framework tangible. It is the kind of instructional design that separates "good enough" from "actually useful."

Eli's One-Line Review

A free course that teaches you the difference between feeling productive and actually learning — and that difference alone is worth more than most $200 courses I have seen.

This is the first post in my "Courses I've Taken" series. Every review is my honest assessment — no sponsorships, no affiliate links, no pressure to be nice. If a course wastes your time, I will say so. Follow my journey as I learn new skills and build tools with Brian at Actyra.